McGill University

Computer Science Dept.

TechnicalQuake

A Project for CS767 - Non-Photorealistic Rendering


Michael Batchelder and Kacper Wysocki

Winter 2005

Non-Photorealistic Rendering (NPR) is a very wide field. Often it is associated with techniques created with the intent to mimic human artists. They may emulate various painting styles (such as impressionism or pointillism), or they might give the digital artist a more interactive tool for creating images in the manner of a certain medium, such as watercolor or stippling. In other instances they create a new medium, such as image mosaics. NPR, however, does not stop there. There are areas of study that are more technical in nature.

There has been a fair amount of research in the field of technical illustration and rendering. Specifically, computers can be used with 3D models to produce images that are easier to understand than those produced by the human hand. Some of the common effects that have proven useful include silhouette enhancement, non-photorealistic shading and lighting to better portray shape and contour, and transparency.

Most of the research in this area has been applied to static scenes, but as computers become more powerful there is an opportunity to take these techniques into the real-time realm. Imagine, if you will, the ability to perform a "walk-through" of a 3D technical model, whether it be an engine block, a silicon chip, or a building, in which features have been enhanced so that you better understand the scene.

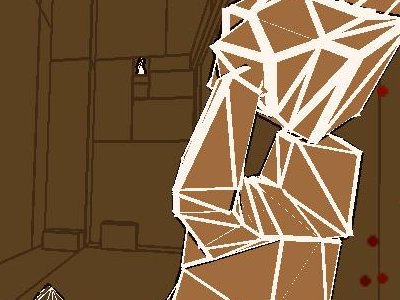

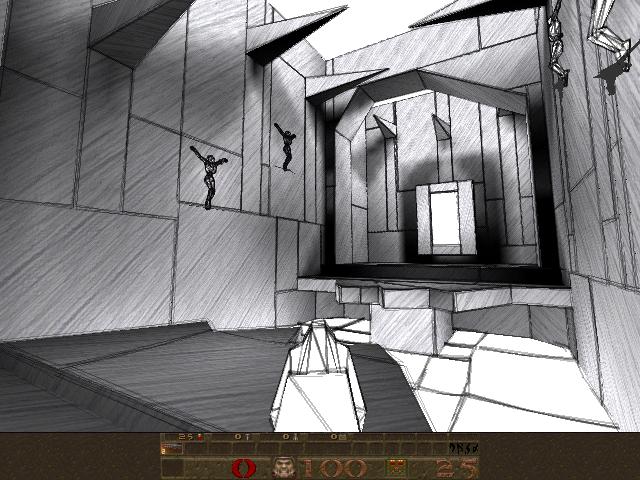

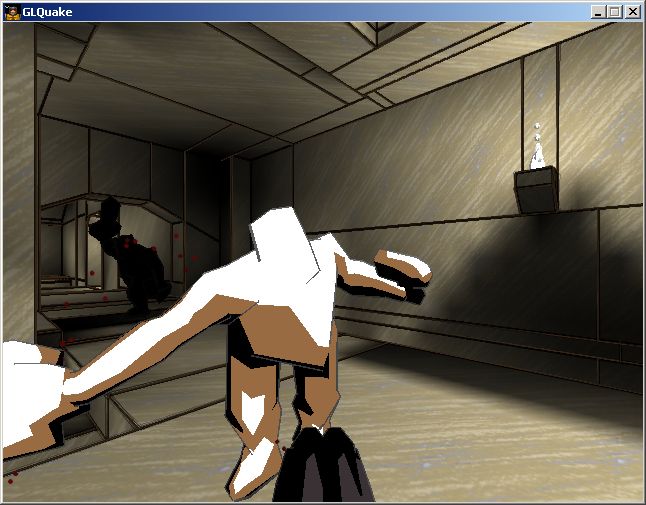

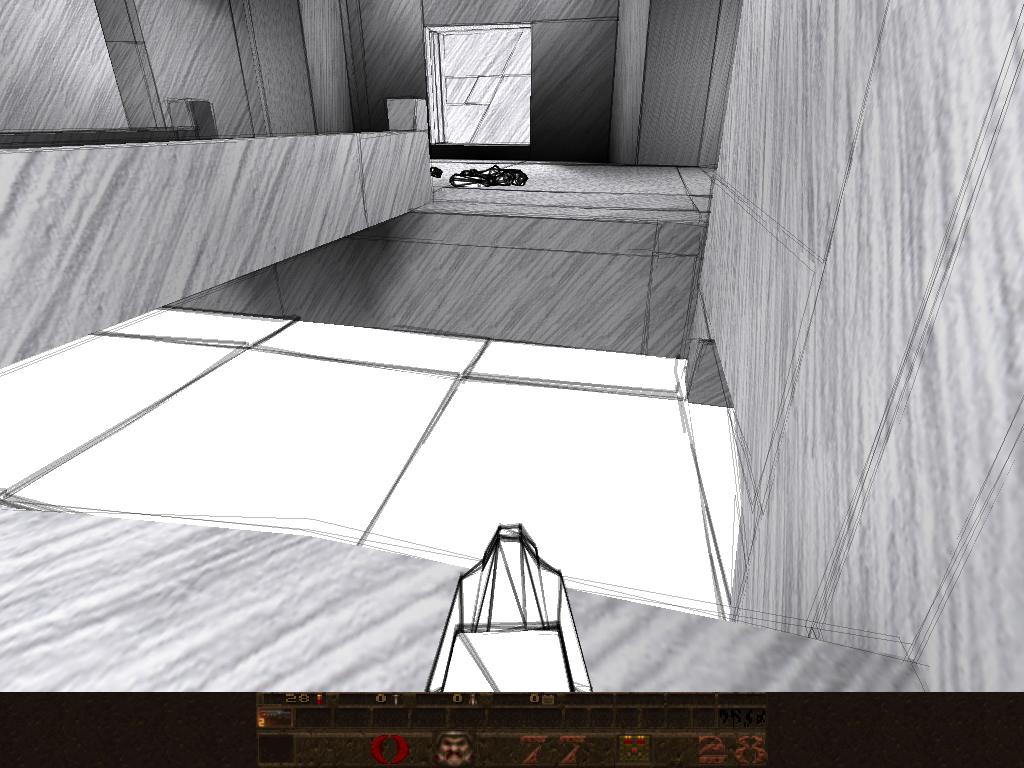

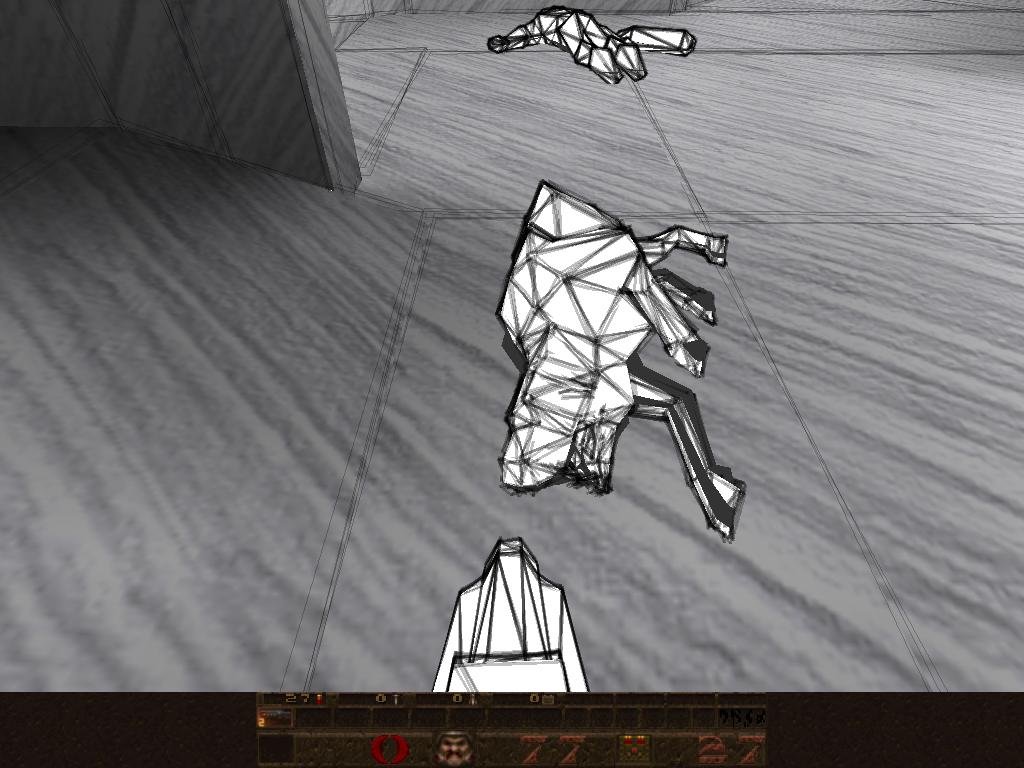

This project's aim is to bring some of these technical enhancements to a real-time 3D engine, along the lines of Gooch et al. [1][3] and Raskar [2]. Specifically, we have chosen the Quake 3D engine [5] provided by id Software as our rendering tool. It uses OpenGL to render sets of polygons to the screen, often applying texture maps to these polygons to simulate various surfaces. We will use the NPRQuake [4] extension to the Quake engine, which abstracts the drawing calls out of the engine, making it easy to implement new rendering styles. NPRQuake has already been used to produce a number of styles, including a pencil sketch style [4] and a cartoon style [6] (see images below). As with these styles, our goal is not to produce any technical models of our own, but to use the very maps and models provided by the Quake game to produce a visually improved 3D world in which features such as edges, creases, and contours are more obvious and more easily understood.

There is more than one way to skin a cat, however. Many enhancements can be accomplished in different ways, each with its own pros and cons. Sometimes one approach gives a better result but worse performance (in frames per second, for example) than another. In this project we look more closely at these various techniques and compare them.

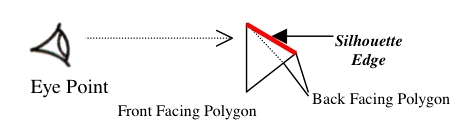

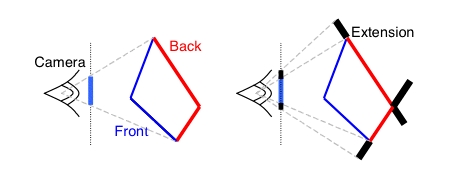

Enhancing the silhouettes of 3D objects in a scene, either by darkening or by widening the silhouette edge, helps the viewer understand the shape and borders of those objects. This can be accomplished in more than one way. First, if the rendering engine has information about the polygons that neighbour a given edge, it can simply test whether one polygon is front-facing and the other back-facing (Fig. 1) and, if so, draw a silhouette line of the desired width.
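The front-facing/back-facing test can be sketched in a few lines. This is only an illustration, not code from the Quake engine or NPRQuake: the function names are hypothetical, and it assumes triangles given as three 3D points wound counter-clockwise when seen from outside the model.

```python
def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def is_front_facing(tri, eye):
    """True if the triangle's outward normal points toward the eye position."""
    v0, v1, v2 = tri
    normal = cross(sub(v1, v0), sub(v2, v0))  # assumes counter-clockwise winding
    return dot(normal, sub(eye, v0)) > 0.0

def is_silhouette_edge(tri_a, tri_b, eye):
    """An edge shared by tri_a and tri_b is a silhouette edge when exactly
    one of the two triangles faces the viewer."""
    return is_front_facing(tri_a, eye) != is_front_facing(tri_b, eye)

# Two triangles sharing the edge (0,0,0)-(0,1,0), folded at a right angle.
top = ((0, 0, 0), (1, 0, 0), (0, 1, 0))    # outward normal +z
side = ((0, 0, 0), (0, 1, 0), (0, 0, -1))  # outward normal -x
print(is_silhouette_edge(top, side, eye=(1, 0, 5)))   # only `top` is visible
print(is_silhouette_edge(top, side, eye=(-1, 0, 5)))  # both are visible
```

Once an edge passes this test, the renderer would draw it as a line of the desired width; the drawing itself is left out here.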

However, if neighbouring information is not available, a different technique can be used: growing the back-facing polygons. Each back-facing polygon is enlarged by the width required for the silhouettes (Fig. 2), which can be accomplished by drawing a quad extension along each edge of the back-facing polygon. Note that a uniform silhouette width can be ensured by varying the quad extension according to the back-facing polygon's orientation with respect to the camera's (viewer's) position.
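As a rough sketch of the quad extensions, the following builds one quad per polygon edge, pushed outward within the polygon's own plane. The name `edge_extension_quads` is hypothetical, and the sketch assumes a planar, convex polygon with counter-clockwise vertices. It uses a constant world-space width; the orientation-dependent adjustment for a uniform on-screen width is not attempted, and the caller is assumed to pass only back-facing polygons.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def edge_extension_quads(polygon, width):
    """Return one quad (p, q, q_out, p_out) per edge of the polygon, where
    p_out and q_out are p and q moved outward by `width` in the polygon's plane."""
    normal = normalize(cross(sub(polygon[1], polygon[0]),
                             sub(polygon[2], polygon[0])))
    quads = []
    count = len(polygon)
    for i in range(count):
        p, q = polygon[i], polygon[(i + 1) % count]
        # For counter-clockwise winding, edge x normal points away from the interior.
        outward = normalize(cross(sub(q, p), normal))
        p_out = tuple(a + width * d for a, d in zip(p, outward))
        q_out = tuple(a + width * d for a, d in zip(q, outward))
        quads.append((p, q, q_out, p_out))
    return quads

square = [(0, 0, 0), (1, 0, 0), (1, 1, 0), (0, 1, 0)]
for quad in edge_extension_quads(square, 0.1):
    print(quad)
```

Drawn in the silhouette color behind the front-facing geometry, only the part of each quad that pokes out past the object's outline stays visible.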

Since the Quake engine does not have any neighbouring information, the second method, that of growing back-facing polygons, is the only real option unless neighbouring information is determined through some sort of pre-processing. We plan to look at both methods to determine their advantages and disadvantages with respect to each other.
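One possible form of that pre-processing is a single pass that records which faces touch each edge. The sketch below is an assumption about how it could be done, not a description of Quake's data structures: it presumes faces are stored as tuples of shared vertex indices, so vertices duplicated per face would first have to be merged by position. The name `build_edge_adjacency` is hypothetical.

```python
from collections import defaultdict

def build_edge_adjacency(faces):
    """Map each undirected edge (low_index, high_index) to the list of
    indices of the faces that use it."""
    edge_faces = defaultdict(list)
    for face_index, face in enumerate(faces):
        count = len(face)
        for i in range(count):
            a, b = face[i], face[(i + 1) % count]
            edge_faces[(min(a, b), max(a, b))].append(face_index)
    return dict(edge_faces)

# Two triangles sharing the edge between vertices 1 and 2.
faces = [(0, 1, 2), (2, 1, 3)]
adjacency = build_edge_adjacency(faces)
print(adjacency[(1, 2)])  # faces on both sides of the shared edge
print(adjacency[(0, 1)])  # a boundary edge used by one face only
```

The table only needs to be built once per static model, after which each edge's two faces can be looked up directly at render time.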

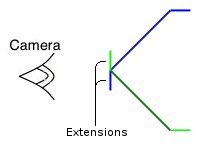

Not all edges of a model are silhouettes. There can be angular edges between the polygons that make up the model that fall on the "front" of the model from the viewer's perspective (Fig. 3). These edges, often called creases, can be enhanced in two ways. The first is the same as for silhouettes, by adding extension quads, only this time they are added at an offset angle, so that if two polygons meet at a very shallow angle (they are almost on the same plane, and therefore should not have attention called to their edge) their extensions will be hidden by each other. The second is to use the neighbour information obtained through pre-processing to draw a crease line. Of course, here we have the opportunity to call attention to the fact that these edges are different from silhouettes, so we might choose to color or weight them differently.

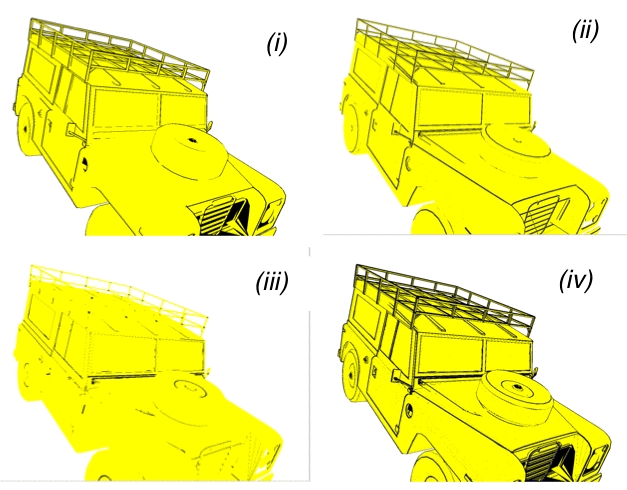

Creases are, in fact, not all alike. Sometimes an edge is at the "peak" of two polygons, like the ridge of a mountain seen from a bird's-eye view (as in Fig. 3). Other times, an edge is at the "valley" of two polygons, like the valley between two mountains. Here, again, is a perfect chance to further enhance the viewer's understanding of the image by differentiating between the two using different weights or colors. Figure 4 is an image from Raskar [2] in which silhouettes (i), ridges (ii), valleys (iii), and the combination of the three (iv) are shown for a model of a rover.
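When neighbour information is available, a shared edge can be classified with two small tests: the angle between the face normals decides whether the edge is a crease at all, and the side of one face's plane on which the other face lies separates ridges from valleys. The sketch below is illustrative only. The function name and the 20-degree threshold are assumptions, and the triangles are assumed to be counter-clockwise when seen from outside the model.

```python
import math

def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])

def dot(a, b):
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def unit_normal(tri):
    n = cross(sub(tri[1], tri[0]), sub(tri[2], tri[0]))
    length = math.sqrt(dot(n, n))
    return (n[0] / length, n[1] / length, n[2] / length)

def classify_crease(tri_a, tri_b, min_angle_deg=20.0):
    """Classify the edge shared by two triangles as 'flat', 'ridge' or 'valley'.
    min_angle_deg is a hypothetical threshold below which the edge is ignored."""
    n_a, n_b = unit_normal(tri_a), unit_normal(tri_b)
    cos_angle = max(-1.0, min(1.0, dot(n_a, n_b)))
    if math.degrees(math.acos(cos_angle)) < min_angle_deg:
        return "flat"  # nearly coplanar: do not call attention to this edge
    # The vertex of tri_b that is not on the shared edge.
    opposite = next(v for v in tri_b if v not in tri_a)
    # Behind tri_a's plane means the surface bends away from the normal: a ridge.
    side = dot(n_a, sub(opposite, tri_a[0]))
    return "ridge" if side < 0.0 else "valley"

floor = ((0, 0, 0), (1, 0, 0), (0, 1, 0))
print(classify_crease(floor, ((0, 0, 0), (0, 1, 0), (0, 0, -1))))  # folds down
print(classify_crease(floor, ((0, 0, 0), (0, 1, 0), (0, 0, 1))))   # folds up
print(classify_crease(floor, ((0, 0, 0), (0, 1, 0), (-1, 0, 0))))  # coplanar
```

The returned label could then select the line color or weight used for that edge.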

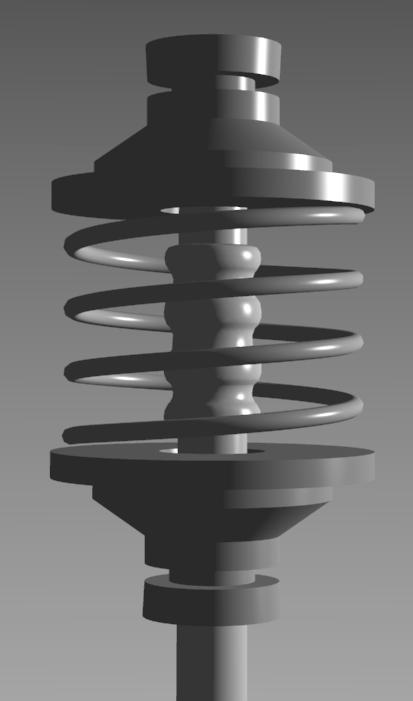

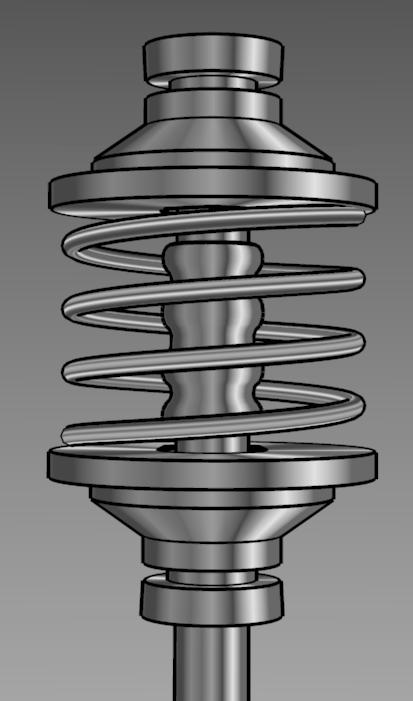

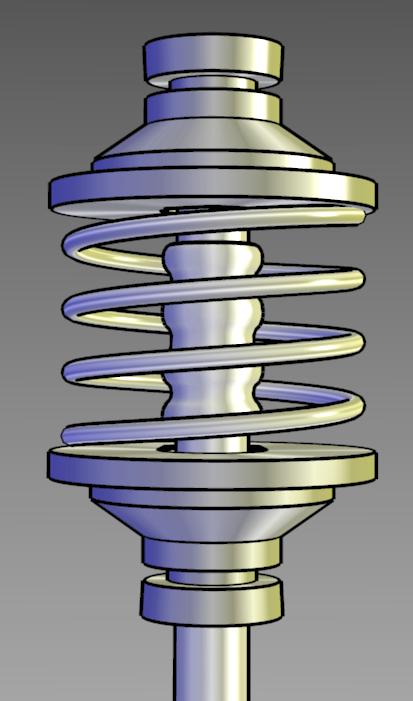

In addition to drawing edge lines and highlights, we must shade the surfaces of objects as well. The particular choice of shading can affect how well a model can be understood. Take for example the various shading models in Figure 5, from Gooch [3]. The first image is a traditional Phong-shaded model, the second image is shaded with Gooch's new metal shading and has edges enhanced, and the third image uses Gooch's new metal shading with a cool-to-warm shift. Clearly it is easier to see detail and shape in the second image compared to the first, and in the third compared to the second.
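The cool-to-warm shift can be illustrated with a small per-surface color computation in the spirit of Gooch et al. [3]: surfaces facing the light drift toward a warm yellow tint and surfaces facing away drift toward a cool blue, so shape remains readable without resorting to black. This is a sketch under assumptions. The function name is hypothetical, and the constants `b`, `y`, `alpha` and `beta` are illustrative defaults rather than values taken from this project.

```python
import math

def normalize(v):
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def cool_to_warm_shade(normal, to_light, base_color,
                       b=0.4, y=0.4, alpha=0.2, beta=0.6):
    """Blend between a cool and a warm tone according to how directly the
    surface faces the light. `to_light` points from the surface to the light."""
    n, l = normalize(normal), normalize(to_light)
    n_dot_l = sum(a * c for a, c in zip(n, l))
    t = (1.0 + n_dot_l) / 2.0  # 0 when facing away, 1 when facing the light
    red, green, blue = base_color
    k_cool = (alpha * red, alpha * green, b + alpha * blue)    # blue tint
    k_warm = (y + beta * red, y + beta * green, beta * blue)   # yellow tint
    return tuple(min(1.0, t * w + (1.0 - t) * c)
                 for w, c in zip(k_warm, k_cool))

red_surface = (1.0, 0.0, 0.0)
print(cool_to_warm_shade((0, 0, 1), (0, 0, 1), red_surface))   # facing the light
print(cool_to_warm_shade((0, 0, 1), (0, 0, -1), red_surface))  # facing away
```

Because the tone never reaches black, dark silhouette and crease lines drawn over the shaded surface stay visible everywhere on the model.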

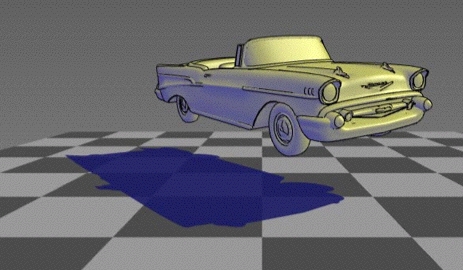

Finally, there are shadows to consider. Shadows can provide a lot of information about a scene, including information otherwise unavailable. Consider a model of a regular tube-based television versus a plasma screen, each viewed from directly in front. Depending on the lighting direction, one could see a shadow that suggests the depth of the object (the tube-based television would obviously be much deeper, but without a shadow the viewer would not necessarily know this). For this reason, the best shadow to use in this case is often a single hard shadow, with no blending at its edges (Fig. 6). This way the edges of the shadow are clearest, as opposed to those of a soft shadow created by blending.

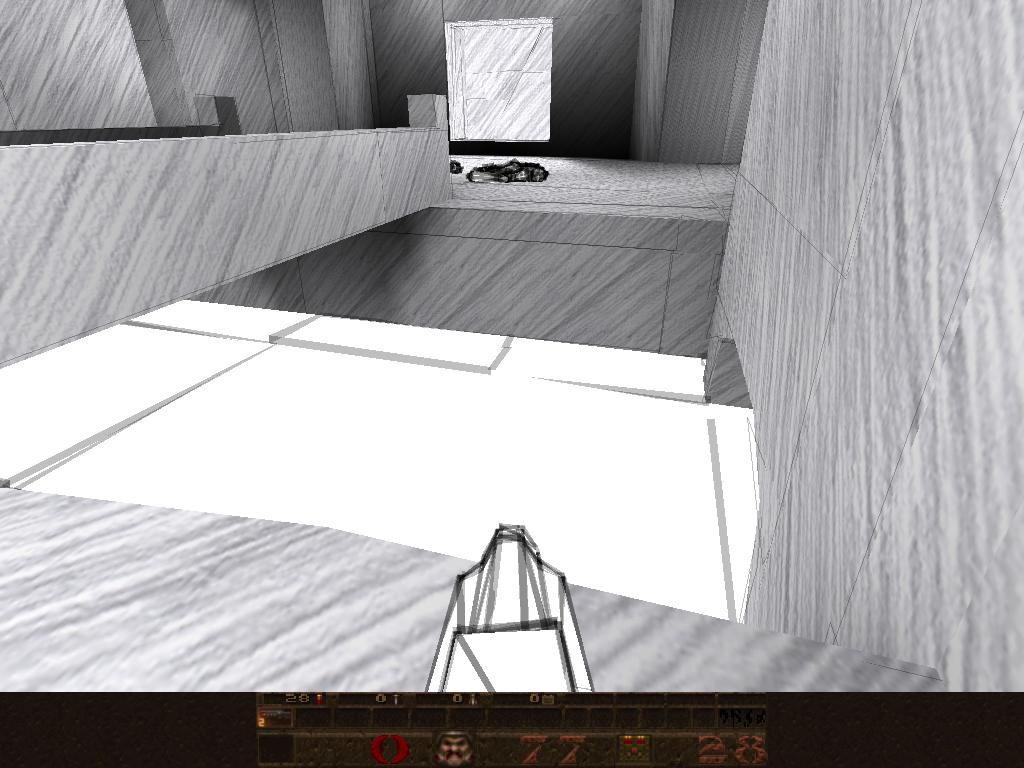

One line per silhouette/crease, thin.

The scene isn't as jittered, but silhouettes and creases are very indistinct.

Eight lines per silhouette/crease, thin.

Creases are pronounced, but provide little fine detail.

One line per silhouette/crease, thick.

We see lots of aliasing. Creases and silhouettes are unpronounced and hard to find.

Eight lines per silhouette/crease, thick.

Creases are distinct, but do not suggest shape accurately enough.

Crease detail.

Drawing multiple jittered lines produces a cool sketchy effect, but at the cost of detail.